How Do CFG Scale and Denoising Strength Affect AI Image Generation in Stable Diffusion?

Stable Diffusion has a lot of little buttons and levers that can drastically change how your images turn out. CFG Scale and Denoising Strength are two of those controls that trip up beginners. For that reason, I want to explain what exactly these parameters do and how fine-tuning them can help you create better AI art compositions.

Technical Notes

Before we begin, a few quick notes on my setup in case you want to follow along or you want a point of reference. For all of the output images in this article, I am using a local installation of Stable Diffusion with the following hardware and software:

- CPU: AMD Ryzen 5 3600

- RAM: 16GB

- GPU: Nvidia GeForce RTX 3060 w/ 12GB of VRAM

- Stable Diffusion interface: Automatic1111’s Web UI

- Model: v1.5 Pruned

What is CFG Scale?

First off, CFG Scale stands for “classifier-free guidance scale”. This parameter controls how closely the AI model will conform to the prompt it’s been given. In other words, this control tells the AI how much artistic freedom it has for generating the image for any given prompt.

The lower you set the CFG scale, the more your model will take creative liberties in generating an output image; the higher you set the CFG scale, the more your model will try to follow the prompt as closely as possible. Both of these extremes can turn out looking bad, and yet…both can create some really interesting effects on images that you may have otherwise never thought to create.
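Mechanically, classifier-free guidance asks the model for two noise predictions at every sampling step, one conditioned on your prompt and one on an empty prompt, and then pushes the result away from the empty-prompt prediction by a factor equal to the CFG Scale. The sketch below shows just that combination step on plain Python lists; the function name `apply_cfg` and the toy numbers are hypothetical, and real pipelines apply the same formula to whole latent tensors.

```python
def apply_cfg(uncond, cond, cfg_scale):
    """Combine unconditional and prompt-conditioned noise predictions.

    guided = uncond + cfg_scale * (cond - uncond)
    A scale of 1 returns the conditional prediction unchanged; larger
    scales extrapolate further in the direction the prompt points.
    """
    if len(uncond) != len(cond):
        raise ValueError("predictions must have the same length")
    return [u + cfg_scale * (c - u) for u, c in zip(uncond, cond)]


# Toy noise predictions for a three-value latent (hypothetical numbers).
uncond = [0.10, -0.20, 0.05]
cond = [0.30, -0.10, 0.00]

for scale in (1.5, 8, 14, 30):
    guided = apply_cfg(uncond, cond, scale)
    print(scale, [round(v, 3) for v in guided])
```

At a scale of 1 the output equals the prompt-conditioned prediction; as the scale grows, the prompt's direction is exaggerated more and more. One common intuition for the “overcooked” look at high settings is exactly this over-extrapolation.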

A very low CFG Scale setting (6 or under) can start to give you abstract and spacey art images. On the other hand, a high setting (about 10 and over) will produce images that will look more and more “overcooked” (by that, I mean images with way too much saturation and contrast—as if someone took the picture and ran it through too many Photoshop filters).

Let’s look at a few examples to see what I mean. Here is my base prompt for Example #1:

| Setting | Value |
|---|---|
| Prompt | Realistic photo of a park in autumn, detailed, realistic, cloudy day, 35mm lens, street photography, by Elsa Bleda |
| Sampling Steps | 30 |
| Sampling Method | DPM++ 2M Karras |
| Size | 512 x 512 pixels |
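If you would rather script a sweep like Example #1 than click through the interface, Automatic1111’s Web UI exposes an HTTP API when it is launched with the `--api` flag. The sketch below assumes that setup on the default local port 7860 and the `/sdapi/v1/txt2img` endpoint; the helper names are hypothetical, and sampler naming varies between Web UI versions (newer releases may split “DPM++ 2M” and the “Karras” scheduler into separate fields).

```python
import base64
import json
import urllib.request

# Assumption: the Web UI is running locally with --api on its default port.
API_URL = "http://127.0.0.1:7860"

PROMPT = ("Realistic photo of a park in autumn, detailed, realistic, cloudy day, "
          "35mm lens, street photography, by Elsa Bleda")


def txt2img_payload(prompt, cfg_scale, steps=30, sampler="DPM++ 2M Karras",
                    width=512, height=512, seed=-1):
    """Build a txt2img request body. A seed of -1 lets the Web UI pick a random seed."""
    return {
        "prompt": prompt,
        "steps": steps,
        "sampler_name": sampler,
        "cfg_scale": cfg_scale,
        "width": width,
        "height": height,
        "seed": seed,
    }


def post_json(endpoint, payload):
    """POST a JSON body to the Web UI API and return the parsed JSON response."""
    request = urllib.request.Request(
        API_URL + endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


def run_cfg_sweep(cfg_scales=(1.5, 8, 14, 30)):
    """Generate one image per CFG value and save the first result of each to disk."""
    for cfg in cfg_scales:
        result = post_json("/sdapi/v1/txt2img", txt2img_payload(PROMPT, cfg))
        # The response's "images" list holds base64-encoded PNG data.
        with open(f"cfg_{cfg}.png", "wb") as f:
            f.write(base64.b64decode(result["images"][0]))
```

Nothing is sent until you call `run_cfg_sweep()` with the server running. Keeping every other setting fixed in the payload is what makes the CFG Scale the only variable being compared.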

Comparing CFG Scale Changes

And here are the results I got with the same prompt at 4 different CFG Scale settings. Please note that these images were not cherry-picked; these are the first images that Stable Diffusion generated for each CFG Scale setting:

[Figure: Example #1 outputs at CFG Scale 1.5, 8, 14, and 30]

What observations can we make when comparing these images? The low-CFG result did not really care about my prompt’s request for a photo-realistic image or the specific photography style I invoked. It looks more like a digital painting…albeit a very cool painting! The general idea is there: it’s a park, it’s autumn, it’s not sunny. On the opposite end of the spectrum, the highest-CFG image does not look photo-realistic either; the colors and contrast are totally blown out and overdone.

What we can learn from these tests is that the middle ground often yields better results by striking a compromise between your idea and the AI’s idea of how the image should look. Let’s try one more test with different subject matter and style guidance, but this time we’ll use the same seed for all three versions to really accentuate how the CFG Scale affects the final image. The seed came from the middle image, which I generated first; I then locked in that seed number and just moved the CFG Scale up and down. Again, the results were not cherry-picked.

Second CFG Scale Test

| Setting | Value |
|---|---|
| Prompt | A portrait painting of a pretty woman in a field of flowers by Dean Cornwell |
| Sampling Steps | 30 |
| Sampling Method | Euler |
| Size | 512 x 512 pixels |
| Seed | 1051830805 |
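The “generate the middle image first, then lock its seed” workflow can also be scripted. This sketch assumes Automatic1111’s API, where a txt2img response carries an `info` field containing a JSON string that records the seed actually used; the helper names are hypothetical, and the payloads would be POSTed to `/sdapi/v1/txt2img` just like any other request.

```python
import json

PROMPT = ("A portrait painting of a pretty woman in a field of flowers "
          "by Dean Cornwell")


def seed_from_info(info_json):
    """Return the seed recorded in a txt2img response's "info" field (a JSON string)."""
    return json.loads(info_json)["seed"]


def locked_seed_payloads(prompt, seed, cfg_scales, steps=30, sampler="Euler"):
    """Build one txt2img request body per CFG value, all sharing the same seed."""
    return [
        {
            "prompt": prompt,
            "steps": steps,
            "sampler_name": sampler,
            "cfg_scale": cfg,
            "width": 512,
            "height": 512,
            # Same seed (and same other settings) -> same starting noise,
            # so the CFG Scale is the only thing that changes.
            "seed": seed,
        }
        for cfg in cfg_scales
    ]


# Reusing the seed of the article's middle (CFG 8) image:
payloads = locked_seed_payloads(PROMPT, 1051830805, (1.5, 8, 30))
```

In a live run you would first send a CFG 8 request with a seed of -1, pass the response’s `info` string to `seed_from_info`, and feed that number into `locked_seed_payloads`.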

[Figure: Example #2 outputs at CFG Scale 1.5, 8, and 30, all using seed 1051830805]

Again, the results got softer and more abstract as the CFG Scale went down, yet at those lower CFG settings the images contained more detail. When the setting was cranked up, the image became over-saturated and took on rounder shapes. This is another big point to keep in mind: in these tests, low CFG Scales produced more detail because the AI had more leeway for “imagining” the details.

In my personal experience, high CFG Scales tend to ruin a composition more often than improve it. If you take too much freedom away from the AI and start backseat prompting, then the results will start looking hyper-stylized and forced. So I guess Stable Diffusion is a fan of Lady Liberty.

What is Denoising Strength?

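Denoising Strength is similar to CFG Scale, except that it only comes into play when Stable Diffusion starts from an existing image, as in img2img generations. That’s because Denoising Strength tells Stable Diffusion how much noise to add to your input/source image before regenerating it, and therefore how far the output is allowed to drift away from that image and toward the accompanying text prompt.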
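Under the hood, img2img partially noises your source image and then denoises it, and Denoising Strength roughly sets how far into the noise schedule it starts. Diffusion models noise a clean latent as x_t = sqrt(a_bar_t) * x_0 + sqrt(1 - a_bar_t) * noise, so the sketch below computes those two weights using Stable Diffusion v1’s “scaled linear” beta schedule (betas from 0.00085 to 0.012 over 1000 training steps). Mapping a strength s to a starting timestep of about s x 999 is a simplification, and the function names are hypothetical.

```python
import math


def sd_alphas_cumprod(num_train_steps=1000, beta_start=0.00085, beta_end=0.012):
    """Cumulative alpha products for Stable Diffusion v1's "scaled linear" beta schedule."""
    lo, hi = math.sqrt(beta_start), math.sqrt(beta_end)
    alphas_cumprod, running = [], 1.0
    for i in range(num_train_steps):
        # Betas are linearly spaced in sqrt-space, then squared.
        beta = (lo + (hi - lo) * i / (num_train_steps - 1)) ** 2
        running *= 1.0 - beta
        alphas_cumprod.append(running)
    return alphas_cumprod


def signal_and_noise(strength, alphas_cumprod):
    """Approximate weights on the source latent and on fresh noise where img2img starts.

    Simplification: the starting timestep is taken as strength * (T - 1).
    """
    t = round(strength * (len(alphas_cumprod) - 1))
    a_bar = alphas_cumprod[t]
    return math.sqrt(a_bar), math.sqrt(1.0 - a_bar)


abar = sd_alphas_cumprod()
for s in (0.05, 0.5, 0.9):
    source_w, noise_w = signal_and_noise(s, abar)
    print(f"strength {s}: source weight {source_w:.3f}, noise weight {noise_w:.3f}")
```

The source weight shrinks as strength rises, which is why a low value leaves most of the original image intact while a value near 1 leaves the sampler with little more than noise to work from.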

The lower you set this parameter, the more your output image will resemble the input. If you set it at 0.5 and below, your generation will look very close to your original image. But if you set the Denoising Strength all the way up to 1, then you’ll get an almost completely different image. Let’s illustrate this with an example:
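Strength typically also decides how many of your sampling steps actually run, because the sampler skips the early, noisiest part of the schedule. The sketch below follows the rule used in Hugging Face diffusers’ img2img pipeline; Automatic1111’s Web UI uses a similar but not identical rule (and has an option that changes how img2img steps are counted), so treat the numbers as illustrative.

```python
def img2img_steps(num_inference_steps, strength):
    """Number of denoising steps an img2img run performs for a given strength.

    Modeled on the diffusers img2img pipeline: the first
    (num_inference_steps - int(num_inference_steps * strength)) steps are skipped.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


for strength in (0.05, 0.5, 0.9, 1.0):
    print(strength, img2img_steps(20, strength))
```

With the 20 sampling steps used in the example below, this rule would run 1, 10, and 18 denoising steps for strengths of 0.05, 0.5, and 0.9.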

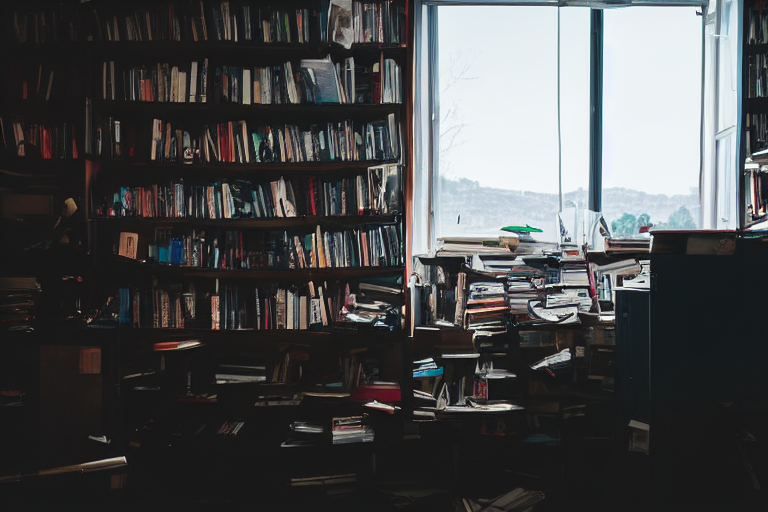

| Setting | Value |
|---|---|
| Prompt | photo of a messy room with a lot of books and papers on the shelves and a window with a view, by Elsa Bleda |
| Sampling Steps | 20 |
| Sampling Method | DPM++ 2M Karras |
| Size | 768 x 512 pixels |
| CFG Scale | 7 |
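A denoising sweep like this one can be scripted the same way as a txt2img sweep. This sketch assumes Automatic1111’s `/sdapi/v1/img2img` endpoint, which accepts the source image as a base64 string inside an `init_images` list alongside a `denoising_strength` value; the helper names are hypothetical.

```python
import base64

PROMPT = ("photo of a messy room with a lot of books and papers on the shelves "
          "and a window with a view, by Elsa Bleda")


def encode_image(path):
    """Read an image file and return its bytes as a base64 string for the API."""
    with open(path, "rb") as f:
        return base64.b64encode(f.read()).decode("ascii")


def img2img_payload(init_image_b64, prompt, denoising_strength, steps=20,
                    sampler="DPM++ 2M Karras", cfg_scale=7, width=768, height=512):
    """Build an img2img request body; only denoising_strength changes across the sweep."""
    if not 0.0 <= denoising_strength <= 1.0:
        raise ValueError("denoising_strength must be between 0 and 1")
    return {
        "init_images": [init_image_b64],
        "prompt": prompt,
        "denoising_strength": denoising_strength,
        "steps": steps,
        "sampler_name": sampler,
        "cfg_scale": cfg_scale,
        "width": width,
        "height": height,
    }
```

For a comparison like this one you might build `[img2img_payload(encode_image("image-1.png"), PROMPT, s) for s in (0.05, 0.5, 0.9)]` and POST each body to `/sdapi/v1/img2img`; `image-1.png` simply stands in for your own source image.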

[Figure: source image and img2img outputs at Denoising Strength 0.05, 0.5, and 0.9]

As you may notice, the final output image at 0.9 denoising still maintains a general aesthetic of the original image but diverges distinctly in subject matter and even perspective. While the original image features binders on the shelf, the final output image steered clearly towards the prompt’s mention of “books and papers”.

Best Practices for Improving Image Results

In conclusion, CFG Scale and Denoising Strength control how closely your images conform to the prompt (CFG Scale) or your source image (Denoising Strength). Let’s wrap it up by reviewing some tips and tricks that you can test out in your own image generations:

- In general, the optimum CFG Scale for distinguishable but well-constructed results is between 7 and 10, though I’ve gotten some very nice images as low as 5 that still retained the essence of the prompt.

- The sweet spot for CFG Scale settings will vary based on your prompt’s subject matter and style: landscapes, portraits, photorealism, paintings, still life, and people may each call for a different value.

- A low CFG Scale tends to add more detail to images than a high one.

- If you are struggling to get the result you want from a prompt, don’t keep cranking up the CFG Scale thinking the AI model will start “listening” to you more. Instead, try rewriting the prompt (Stable Diffusion may not have training data on what you’re asking for). Another great alternative is to do a rough sketch of the image you want and use img2img on it.

- Denoising Strength is an excellent way to tweak an output image that’s almost what you want but needs a few minor adjustments. Send your txt2img art over to the img2img panel and amend the text prompt to fix what you don’t like in the image; then experiment with a range of low denoising values.

- Denoising is also great if you like the style of an image/art piece and you want to emulate it with different subject matter. For this situation, you’ll want to use higher denoising to let your prompt shine through without losing the underlying structure, coloring, or aesthetic of the source image.
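The “send your txt2img art over to img2img” tip can be automated too. This sketch assumes Automatic1111’s API, where a txt2img response’s `images` list already holds base64 PNG data that can be passed straight back as `init_images` to `/sdapi/v1/img2img`. The helper name and both strength ranges are hypothetical starting points, not values taken from the tests above.

```python
def refine_payloads(txt2img_result, amended_prompt, strengths,
                    cfg_scale=7, steps=30, width=512, height=512):
    """Reuse the first txt2img output as the img2img source, once per denoising value."""
    source = txt2img_result["images"][0]  # base64 PNG returned by /sdapi/v1/txt2img
    return [
        {
            "init_images": [source],
            "prompt": amended_prompt,
            "denoising_strength": s,
            "cfg_scale": cfg_scale,
            "steps": steps,
            "width": width,
            "height": height,
        }
        for s in strengths
    ]


# Hypothetical ranges: low values for minor fixes, higher values when the
# prompt should take over more of the image.
TOUCH_UP = (0.2, 0.3, 0.4)
RESTYLE = (0.6, 0.7, 0.8)
```

Keeping `width` and `height` equal to the original generation avoids the Web UI resizing the source image before it is denoised.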
